10 Revelations from the Tech Engine That Powers Your 2025 Wrapped

Every year, Spotify Wrapped transforms raw listening data into a personalized story of your musical year. Behind that glossy, shareable summary lies a complex infrastructure that processes billions of streams, identifies listening patterns, and serves up insights in near real time. For 2025, the engineering team leans on machine learning, distributed systems, and new data visualization techniques. Here are ten technological pillars that make your Wrapped highlights possible, from the moment you press play to the final 'Your Top Genres' card.

1. The Global Data Firehose

Every second, millions of streams flow into Spotify’s data pipeline. The system ingests events from every device, capturing plays, skips, session lengths, and even offline listens. For 2025, engineers optimized stream processing using Apache Kafka and custom in-memory caches to handle peak loads without lag. This firehose is the raw material for all subsequent analysis.
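To make the idea concrete, here is a minimal sketch of how raw events could be rolled up into per-user counters in memory. It is not Spotify's pipeline: the `PlayEvent` fields and the `ListeningAggregator` class are hypothetical, and a plain Python list stands in for a Kafka topic.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class PlayEvent:
    """Hypothetical event shape; the field names are assumptions."""
    user_id: str
    track_id: str
    ms_played: int
    skipped: bool
    offline: bool = False


class ListeningAggregator:
    """Keeps running per-user totals in memory as events arrive."""

    def __init__(self):
        self.plays = defaultdict(int)      # (user_id, track_id) -> completed plays
        self.events = defaultdict(int)     # user_id -> total events seen
        self.skips = defaultdict(int)      # user_id -> skipped events
        self.ms_played = defaultdict(int)  # user_id -> total listening time

    def consume(self, event):
        self.events[event.user_id] += 1
        self.ms_played[event.user_id] += event.ms_played
        if event.skipped:
            self.skips[event.user_id] += 1
        else:
            self.plays[(event.user_id, event.track_id)] += 1

    def skip_rate(self, user_id):
        seen = self.events[user_id]
        return self.skips[user_id] / seen if seen else 0.0


# A list stands in for the event stream in this sketch.
aggregator = ListeningAggregator()
for event in [
    PlayEvent("u1", "t1", 180_000, skipped=False),
    PlayEvent("u1", "t2", 4_000, skipped=True),
]:
    aggregator.consume(event)
```

Derived figures such as skip rate are computed from the counters on demand, so the hot path only increments integers.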

2. Real-Time Personalization Models

Traditional Wrapped relied on batch processing, but the 2025 edition incorporates real-time personalization. Using TensorFlow-on-Spark, the team built models that update your listening profile continuously. This means your Wrapped can reflect your very last playlist shuffle, not just data up to a cutoff date.

3. The 'Audio DNA' Embedding System

To categorize songs into meaningful moments, Spotify employs audio embeddings, which are numerical representations of a track’s acoustic features. The 2025 algorithm runs deep neural networks that extract tempo, key, mood, and even lyric sentiment. These embeddings are then clustered to detect patterns like 'morning commute anthems' or 'late-night jazz sessions'.
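The clustering step can be illustrated with a small k-means sketch. The article does not say which clustering method is used, so k-means is an assumption here, and the two-dimensional "embeddings" (normalized tempo and energy) are made-up toy values.

```python
import math


def assign(points, centroids):
    """Return, for each point, the index of its nearest centroid."""
    return [
        min(range(len(centroids)), key=lambda i: math.dist(p, centroids[i]))
        for p in points
    ]


def kmeans(points, initial_centroids, iterations=10):
    """Plain k-means: alternate between assignment and centroid update."""
    centroids = list(initial_centroids)
    for _ in range(iterations):
        labels = assign(points, centroids)
        for i in range(len(centroids)):
            members = [p for p, label in zip(points, labels) if label == i]
            if members:  # leave an empty cluster's centroid where it is
                centroids[i] = tuple(sum(dim) / len(members) for dim in zip(*members))
    return centroids, assign(points, centroids)


# Toy embeddings: (normalized tempo, energy). Values are illustrative only.
tracks = [(0.90, 0.80), (0.85, 0.90), (0.20, 0.10), (0.15, 0.20)]
centroids, labels = kmeans(tracks, initial_centroids=[tracks[0], tracks[2]])
print(labels)  # [0, 0, 1, 1]
```

Real embeddings would have far more dimensions, but the grouping logic is the same: tracks that sit close together in the embedding space end up sharing a label.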

4. Scalable Graph Databases for Social Listening

Wrapped now highlights shared listening with friends or family thanks to a Nebula Graph database. This system maps your listening network in milliseconds, identifying co-listeners, group playlists, and mutual discoveries. It’s how Spotify tells you 'You both streamed the same song at 3 AM'.
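As a rough illustration of the co-listener idea, the sketch below builds an in-memory graph whose edges connect users who streamed the same track. It uses plain Python dictionaries as a stand-in, not Nebula Graph or its query language, and the function name and input shape are assumptions.

```python
from collections import defaultdict
from itertools import combinations


def co_listener_graph(streams):
    """Map each pair of users to the set of tracks both of them streamed.

    `streams` is an iterable of (user_id, track_id) pairs.
    """
    listeners_by_track = defaultdict(set)
    for user_id, track_id in streams:
        listeners_by_track[track_id].add(user_id)

    shared = defaultdict(set)  # (user_a, user_b) with user_a < user_b -> tracks
    for track_id, users in listeners_by_track.items():
        for pair in combinations(sorted(users), 2):
            shared[pair].add(track_id)
    return shared


streams = [
    ("ana", "t1"), ("ben", "t1"),
    ("ana", "t2"), ("ben", "t2"), ("cy", "t2"),
]
graph = co_listener_graph(streams)
print(sorted(graph[("ana", "ben")]))  # ['t1', 't2']
```

A dedicated graph database earns its place when the network is too large for one machine or when queries need to hop several edges, such as friends of friends.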

5. Distributed Training with Federated Learning

Privacy concerns led engineers to adopt federated learning for the 2025 Wrapped. Instead of centralizing your data, training occurs on your device, and only aggregated model updates are sent to the cloud. This reduces data transfer by 70% while ensuring your Wrapped remains uniquely yours.
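A common way to realize this pattern is federated averaging, sketched below under simplifying assumptions: each device takes one gradient step on a tiny least-squares model using only its own data, and the server combines the resulting weights in proportion to sample counts. The model, function names, and data are all illustrative, not the production setup.

```python
def local_update(weights, data, lr=0.05):
    """One on-device gradient step for least squares.

    `data` is a list of (features, target) pairs that never leaves the device.
    Returns the updated weights and the number of local samples.
    """
    grad = [0.0] * len(weights)
    for features, target in data:
        error = sum(w * x for w, x in zip(weights, features)) - target
        for j, x in enumerate(features):
            grad[j] += 2 * error * x / len(data)
    return [w - lr * g for w, g in zip(weights, grad)], len(data)


def federated_average(updates):
    """Server side: average device weights, weighted by sample count."""
    total = sum(n for _, n in updates)
    dim = len(updates[0][0])
    return [sum(w[j] * n for w, n in updates) / total for j in range(dim)]


# Two hypothetical devices whose private data follows target = 2 * feature.
device_a = [((1.0,), 2.0), ((2.0,), 4.0)]
device_b = [((3.0,), 6.0)]

global_weights = [0.0]
for _ in range(50):
    updates = [local_update(global_weights, d) for d in (device_a, device_b)]
    global_weights = federated_average(updates)
```

Only the weight vectors and sample counts cross the network in this sketch; the raw `(features, target)` pairs stay inside `local_update`.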

6. The 'Moment Detection' Algorithm

What makes a listening moment 'interesting'? The 2025 algorithm uses transformer-based sequence models to detect anomalies, such as a sudden spike in a genre or a repeated song during a specific week. These moments become the story beats of your Wrapped. Processing these sequences requires high-throughput Ray clusters.
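The notion of a "sudden spike" can be shown with a much simpler stand-in than a transformer: a z-score test over weekly play counts for one genre. This is a deliberately swapped-in statistical baseline, not the sequence model described above, and the threshold of 2.0 is an arbitrary assumption.

```python
from statistics import mean, pstdev


def detect_spikes(weekly_counts, threshold=2.0):
    """Return indices of weeks whose count sits `threshold` or more
    standard deviations above the mean of the whole series."""
    mu = mean(weekly_counts)
    sigma = pstdev(weekly_counts)
    if sigma == 0:  # perfectly flat listening: nothing stands out
        return []
    return [
        week for week, count in enumerate(weekly_counts)
        if (count - mu) / sigma >= threshold
    ]


# Hypothetical weekly plays of one genre; week 4 is the outlier.
plays_per_week = [5, 6, 4, 5, 40, 5, 6, 5]
print(detect_spikes(plays_per_week))  # [4]
```

A learned sequence model can pick up subtler patterns, such as a song that returns on the same weekday, but a baseline like this is useful for checking that flagged moments are at least statistically unusual.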

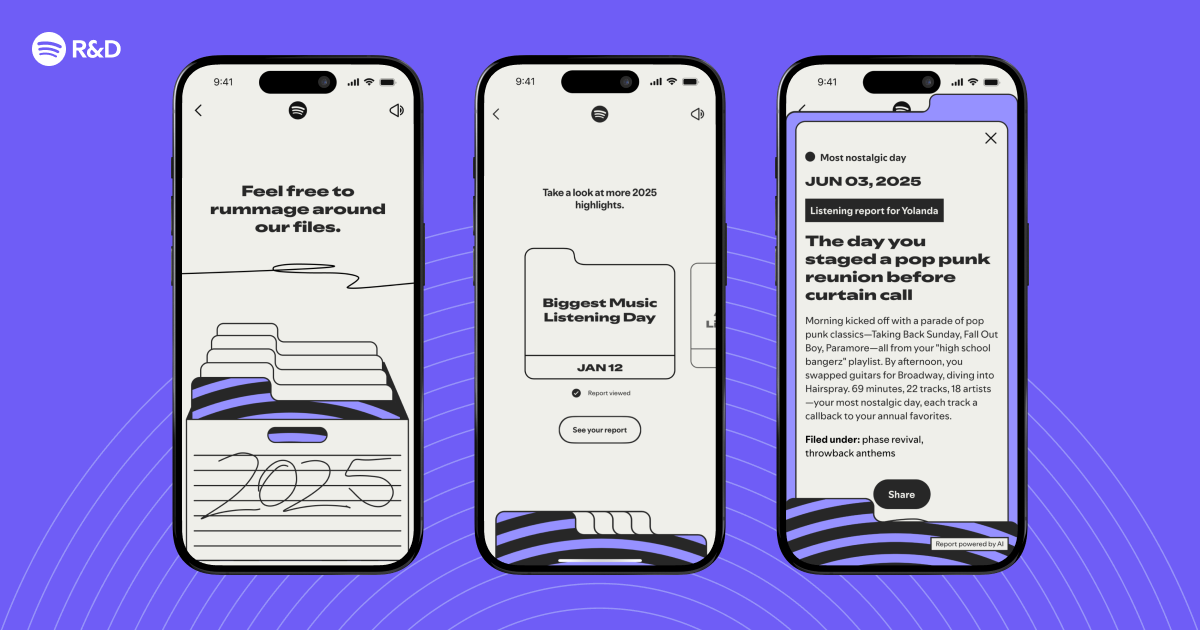

7. Dynamic Visualization Rendering

Each Wrapped story card is generated server-side using WebGL and a custom SVG engine. For 2025, the team introduced variable animations that change based on your data—faster beats for high-energy genres, softer gradients for ambient playlists. The rendering pipeline is optimized for mobile, delivering 60fps even on older devices.

8. A/B Testing at Scale

To ensure your Wrapped feels personal, Spotify runs hundreds of A/B experiments. Every model variant, from the embedding dimension to the threshold for 'top artist,' is tested across millions of users. The 2025 system uses multi-armed bandit algorithms to automatically select the winning variant for your demographic.
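For readers unfamiliar with bandits, here is a minimal epsilon-greedy sketch, one of the simplest multi-armed bandit strategies. The article does not name the specific algorithm, so epsilon-greedy is an assumption, as are the class name, variant labels, and reward values.

```python
import random


class EpsilonGreedyBandit:
    """Mostly serves the best-performing variant, sometimes explores."""

    def __init__(self, variants, epsilon=0.1, seed=None):
        self.variants = list(variants)
        self.epsilon = epsilon
        self.rng = random.Random(seed)
        self.pulls = {v: 0 for v in self.variants}
        self.mean_reward = {v: 0.0 for v in self.variants}

    def select(self):
        # Explore with probability epsilon, otherwise exploit the current best.
        if self.rng.random() < self.epsilon:
            return self.rng.choice(self.variants)
        return max(self.variants, key=lambda v: self.mean_reward[v])

    def update(self, variant, reward):
        # Incremental mean: no need to store every past reward.
        self.pulls[variant] += 1
        n = self.pulls[variant]
        self.mean_reward[variant] += (reward - self.mean_reward[variant]) / n


# Hypothetical variants of a "top artist" threshold; reward could be a share rate.
bandit = EpsilonGreedyBandit(["threshold_low", "threshold_high"], epsilon=0.1, seed=7)
variant = bandit.select()
bandit.update(variant, reward=1.0)
```

Unlike a fixed-length A/B test, the bandit shifts traffic toward the better variant while the experiment is still running. Selecting per demographic, as the article describes, would mean keeping one such bandit per segment or moving to a contextual bandit.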

9. Privacy-Preserving Aggregation

Your Wrapped summary never exposes raw data. Aggregation occurs via a two-layer system: first on-device using differential privacy (adding random noise), then in the cloud with secure enclaves. Only general statistics like 'Top 0.1% of listeners' are revealed, not your exact play count. This design passed internal audits in early 2025.
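The "adding random noise" step is typically done with the Laplace mechanism, sketched below for a single count. This covers only the on-device layer, not the secure-enclave layer, and the epsilon value, sensitivity, and function names are assumptions chosen for illustration.

```python
import random


def laplace_noise(scale, rng):
    """Sample Laplace(0, scale) as the difference of two exponentials."""
    return rng.expovariate(1 / scale) - rng.expovariate(1 / scale)


def private_count(true_count, epsilon, sensitivity=1.0, rng=None):
    """Laplace mechanism: noise scale is sensitivity / epsilon.

    Smaller epsilon means more noise and stronger privacy.
    """
    rng = rng or random.Random()
    return true_count + laplace_noise(sensitivity / epsilon, rng)


# Hypothetical play count for one track, reported with noise.
rng = random.Random(0)
noisy = private_count(100, epsilon=1.0, rng=rng)
```

Any single noisy value hides the exact count, yet the noise averages out over many users, which is why aggregate statistics such as percentile bands remain usable.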

10. The Final 'Share' Infrastructure

The share button triggers a dedicated microservice that renders your Wrapped as a 1080p video, a still image, or an interactive link. This service uses a queue-based architecture (RabbitMQ) to handle viral spikes, such as when a new artist drops and millions of people share simultaneously. Auto-scaling on Kubernetes keeps the service at 99.9% uptime.
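The queue-and-workers pattern can be sketched with Python's standard library. Here an in-process `queue.Queue` stands in for RabbitMQ and threads stand in for render pods; the job shape and the placeholder "rendering" step are hypothetical.

```python
import queue
import threading


def run_render_workers(jobs, num_workers=4):
    """Drain a queue of (user_id, fmt) render jobs with a pool of workers."""
    pending = queue.Queue()
    for job in jobs:
        pending.put(job)

    results = {}
    lock = threading.Lock()

    def worker():
        while True:
            try:
                user_id, fmt = pending.get_nowait()
            except queue.Empty:
                return  # queue drained, worker exits
            artifact = f"wrapped-{user_id}.{fmt}"  # placeholder for real rendering
            with lock:
                results[(user_id, fmt)] = artifact
            pending.task_done()

    threads = [threading.Thread(target=worker) for _ in range(num_workers)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return results


rendered = run_render_workers([("u1", "mp4"), ("u2", "png"), ("u3", "mp4")])
```

The queue absorbs bursts: share requests pile up as pending jobs instead of overwhelming the renderers, and adding workers (or, in the real system, pods) shortens the backlog.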

From data ingestion to social sharing, every component of the 2025 Wrapped tech stack is designed to deliver a seamless, insightful, and privacy-respecting experience. The magic you see on your screen is the result of years of engineering evolution. Next time you share your top five artists, remember the immense technological orchestra playing behind the scenes.
