Mistral Launches Powerful Medium 3.5 Model and Cloud Agent Features in Le Chat

PARIS, France – Mistral AI today released Mistral Medium 3.5, a 128-billion parameter model designed to handle instruction following, advanced reasoning, and code generation within a single unified system. The company simultaneously rolled out new cloud-based autonomous agent capabilities across its Vibe and Le Chat platforms.

The new model consolidates three capabilities (instruction adherence, logical reasoning, and coding) that previously often called for separate specialized models, promising simpler deployment for developers and enterprises.

“Mistral Medium 3.5 bridges the gap between raw performance and practical usability, allowing teams to handle complex, multi-step tasks without juggling multiple model architectures,” said a Mistral AI spokesperson.

Background

Founded in 2023, Mistral AI quickly emerged as a European challenger to OpenAI and Anthropic, backed by prominent investors like Andreessen Horowitz and Lightspeed Venture Partners. The company gained early traction with its open-weight Mistral 7B and Mixtral 8x7B models, which offered competitive performance at lower computational costs.

Mistral Medium 3.5 is the latest iteration in the company’s mid-range family, boasting 128 billion parameters—a size that balances capability with inference efficiency. The model has been fine-tuned on multilingual data and optimized for tasks requiring deep reasoning chains, such as code debugging and complex analytical queries.

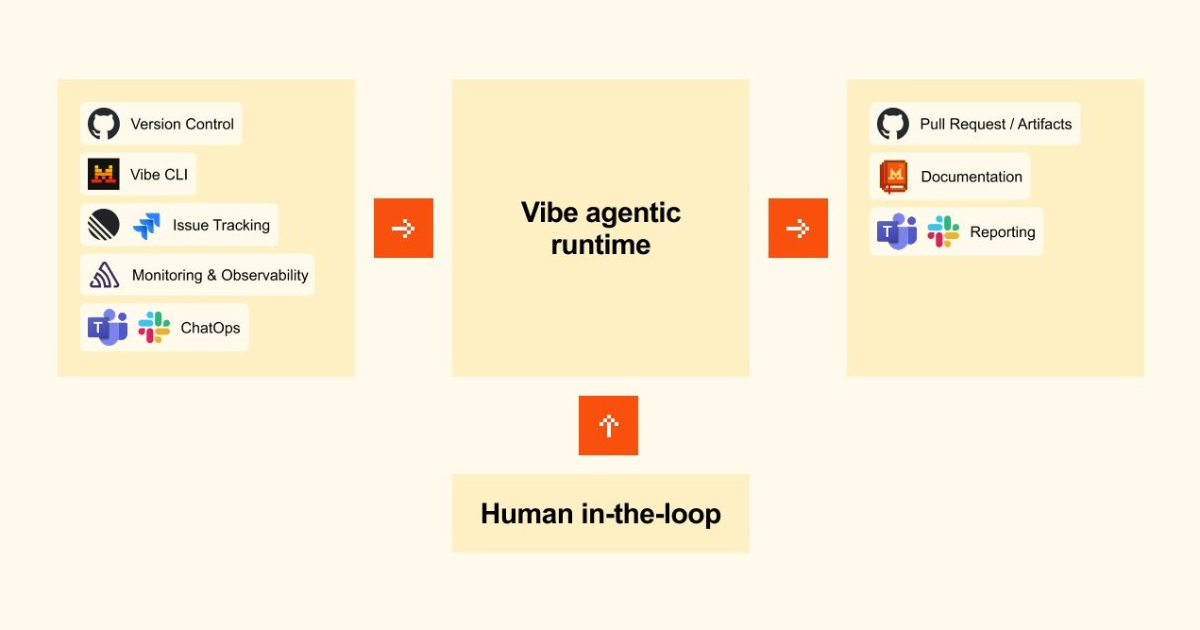

The new cloud agents are accessible through Vibe, a creative productivity suite, and Le Chat, Mistral’s conversational AI platform, and let users deploy autonomous workflows. These agents can connect to external APIs, retrieve real-time data, and execute sequences of operations with minimal human intervention.
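As a rough illustration of what "executing a sequence of operations" can look like, the sketch below runs a fixed two-step plan in which the second step consumes the output of the first. It does not use Mistral's API or describe how Mistral's agents are implemented: the tool functions, the `run_workflow` helper, the plan format, and the exchange-rate values are all hypothetical, and in a real agent the plan would be produced by the model rather than hardcoded.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical tools standing in for external APIs. Nothing here calls a real service.
def fetch_exchange_rate(currency: str) -> float:
    """Stand-in for a real-time data lookup; returns a fixed illustrative rate."""
    rates = {"EUR": 1.0, "USD": 1.1}
    return rates[currency]

def convert(amount: float, rate: float) -> float:
    """Apply a rate to an amount, rounded to two decimals."""
    return round(amount * rate, 2)

TOOLS: Dict[str, Callable] = {
    "fetch_exchange_rate": fetch_exchange_rate,
    "convert": convert,
}

# One step = (name to store the result under, tool to call, names of inputs).
Step = Tuple[str, str, List[str]]

def run_workflow(plan: List[Step],
                 tools: Dict[str, Callable],
                 context: Dict[str, object]) -> Dict[str, object]:
    """Run each step in order, feeding earlier results into later steps."""
    state = dict(context)  # copy so the caller's inputs are not mutated
    for result_name, tool_name, input_names in plan:
        tool = tools[tool_name]
        args = [state[name] for name in input_names]
        state[result_name] = tool(*args)
    return state

# Hardcoded plan for illustration; an agent would generate this itself.
plan: List[Step] = [
    ("rate", "fetch_exchange_rate", ["currency"]),
    ("total", "convert", ["amount", "rate"]),
]

result = run_workflow(plan, TOOLS, {"currency": "USD", "amount": 200.0})
print(result["total"])
```

Keeping the tools in a plain dictionary means a new capability can be added by registering another function, without changing the loop that runs the plan.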

What This Means

For developers, Mistral Medium 3.5 offers an alternative to OpenAI’s GPT-4 and Anthropic’s Claude, particularly for applications that demand rigorous instruction following and robust code generation. The unified architecture reduces system complexity: one model handles reasoning, instruction-following, and coding tasks, which simplifies API management and can reduce latency.

The introduction of cloud agents signals Mistral’s aggressive push into autonomous AI operations, directly competing with OpenAI’s GPTs and Anthropic’s tool-use features. Analysts view this move as a strategic effort to capture enterprise customers seeking flexible, self-hosted AI solutions.

“By combining a powerful mid-size model with cloud-native agent functionality, Mistral is democratizing access to sophisticated AI automation,” said Dr. Elena Marchetti, an AI analyst at Gartner. “The 128-billion parameter sweet spot allows businesses to achieve high performance without the prohibitive costs of frontier models.”

Industry observers also note that Mistral’s open-weight approach could accelerate adoption in regulated industries, where data privacy demands on-premises or hybrid deployments. The model is available immediately via Mistral’s API and within the Le Chat platform, with a lighter variant for edge devices expected later this quarter.

- Unified intelligence: Mistral Medium 3.5 integrates instruction following, reasoning, and coding in one model.

- Cloud agents: New autonomous capabilities in Vibe and Le Chat enable multi-step, API-connected tasks.

- Competitive positioning: Targets cost-sensitive enterprises needing advanced AI without the overhead of larger systems.

This story is developing. Additional details on pricing and availability are expected in the coming days.