AI Agent Revolution: How OpenAI's GPT-5.5 and NVIDIA Infrastructure Empower Enterprise Development

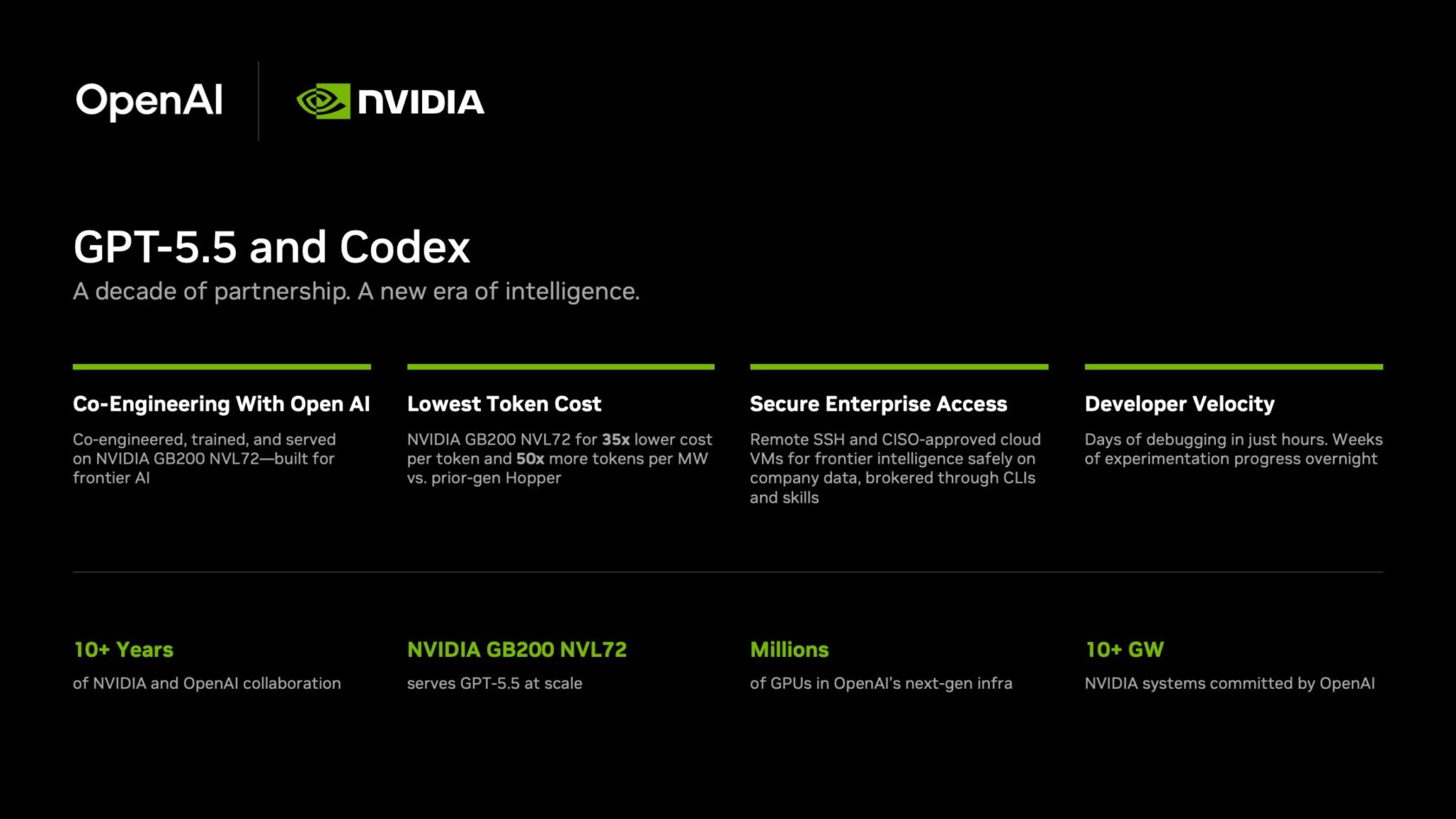

Artificial intelligence agents are reshaping how developers and knowledge workers approach complex tasks. OpenAI's GPT-5.5 model, running on NVIDIA's GB200 NVL72 infrastructure, now powers Codex, a coding application that accelerates everything from debugging to feature delivery. More than 10,000 NVIDIA employees already use it, shrinking experimentation from weeks to overnight and shipping features from natural-language prompts. Below, we answer key questions about this technology.

What is GPT-5.5 and how does it enhance Codex?

GPT-5.5 is OpenAI's most advanced frontier model and now serves as the engine behind Codex, an agentic coding application. Compared with earlier models, GPT-5.5 is stronger at multi-step reasoning, complex problem-solving, and context-rich code generation. It allows Codex to process entire codebases, understand dependencies across hundreds of files, and generate end-to-end features from natural-language prompts. Developers can describe a desired feature in plain English, and Codex handles the implementation: writing, testing, and debugging the code. In enterprise deployments, Codex works through SSH connections to cloud virtual machines, which keeps sensitive data protected. With GPT-5.5, Codex acts as a coding partner, shifting developers from manual coding to high-level oversight and innovation.

What hardware powers GPT-5.5 and why is it significant?

GPT-5.5 runs on NVIDIA's GB200 NVL72 rack-scale systems, a purpose-built infrastructure for frontier model inference. The GB200 NVL72 delivers a 35x reduction in cost per million tokens and 50x higher token output per second per megawatt compared to previous-generation systems. These economics make it viable to deploy advanced AI at enterprise scale, where thousands of users simultaneously interact with the model. For NVIDIA, this means lower operational costs and faster response times. The system's architecture also supports efficient power usage, aligning with sustainability goals. By optimizing both cost and speed, the GB200 NVL72 enables Codex to handle real-world enterprise workflows without compromising on performance or budget.
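To make the two ratios above concrete, here is a minimal arithmetic sketch. Only the 35x and 50x factors come from the article; the baseline cost and throughput figures are hypothetical placeholders chosen for illustration, not published numbers.

```python
# Factors quoted in the article for GB200 NVL72 vs. previous-generation systems.
COST_REDUCTION_FACTOR = 35.0   # 35x lower cost per million tokens
THROUGHPUT_GAIN_FACTOR = 50.0  # 50x more tokens per second per megawatt


def new_cost_per_million_tokens(baseline_cost: float) -> float:
    """Cost per million tokens after applying the quoted 35x reduction."""
    return baseline_cost / COST_REDUCTION_FACTOR


def new_tokens_per_second_per_mw(baseline_throughput: float) -> float:
    """Tokens per second per megawatt after applying the quoted 50x gain."""
    return baseline_throughput * THROUGHPUT_GAIN_FACTOR


if __name__ == "__main__":
    # Hypothetical baselines (assumptions, not figures from NVIDIA or OpenAI).
    baseline_cost = 7.00            # dollars per million tokens
    baseline_throughput = 20_000.0  # tokens per second per megawatt

    print(f"cost per million tokens: {new_cost_per_million_tokens(baseline_cost):.2f}")
    print(f"tokens/s per MW: {new_tokens_per_second_per_mw(baseline_throughput):,.0f}")
```

Both effects compound at enterprise scale: the same power budget serves far more requests, and each request costs a fraction of what it did before.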

What measurable benefits have NVIDIA employees seen with Codex?

Over 10,000 NVIDIA employees across departments—including engineering, legal, marketing, finance, and HR—are using GPT-5.5-powered Codex. The results are dramatic: debugging cycles that once took days now close in hours. Experimentation that required weeks is completed overnight. Teams are shipping end-to-end features directly from natural-language prompts with higher reliability and fewer wasted cycles. Users describe the experience as "mind-blowing" and "life-changing". For instance, complex multi-file codebase changes that previously required coordinated efforts across multiple developers can now be handled by a single engineer with Codex's assistance. This acceleration doesn't just improve productivity; it allows teams to iterate faster, explore more ideas, and focus on creative problem-solving rather than repetitive coding tasks.

How does NVIDIA ensure security and enterprise compliance with Codex?

Security is paramount for enterprise AI deployments. NVIDIA's Codex implementation uses a zero-data retention policy: no user data is stored beyond the immediate session. Agents work through remote Secure Shell (SSH) connections to approved cloud virtual machines (VMs). These VMs act as sandboxes, keeping sensitive data within the enterprise boundary. Each employee gets a dedicated cloud VM with full auditability, meaning every action the agent performs is logged and traceable. Agents access production systems with read-only permissions through command-line interfaces and the same agentic toolkit (Skills) that NVIDIA uses for internal automation workflows. This architecture ensures that while Codex can analyze and act on real company data, it never exposes that data externally or makes unauthorized changes.
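The combination of read-only access and full auditability can be illustrated with a small sketch. This is not NVIDIA's or OpenAI's implementation; the allowlist, the `AuditedSession` class, and its methods are hypothetical names invented for this example. The sketch only decides whether a command looks read-only and records the decision. It does not execute anything, and a real deployment would enforce permissions at the operating-system and network level rather than by string checks.

```python
import shlex
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical allowlist of commands treated as read-only (an assumption).
READ_ONLY_COMMANDS = {"ls", "cat", "grep", "head", "tail"}

# Characters that enable chaining or redirection; reject them outright.
SHELL_METACHARACTERS = set(";|&><`$")


@dataclass
class AuditEntry:
    timestamp: str
    user: str
    command: str
    allowed: bool


@dataclass
class AuditedSession:
    """Tracks every command an agent requests on one employee's VM."""
    user: str
    log: list = field(default_factory=list)

    def is_read_only(self, command: str) -> bool:
        # Refuse anything that could chain commands or write via redirection.
        if any(ch in SHELL_METACHARACTERS for ch in command):
            return False
        try:
            parts = shlex.split(command)
        except ValueError:  # e.g. unbalanced quotes
            return False
        return bool(parts) and parts[0] in READ_ONLY_COMMANDS

    def request(self, command: str) -> bool:
        """Record the request in the audit log and return the decision."""
        allowed = self.is_read_only(command)
        self.log.append(
            AuditEntry(
                timestamp=datetime.now(timezone.utc).isoformat(),
                user=self.user,
                command=command,
                allowed=allowed,
            )
        )
        return allowed


if __name__ == "__main__":
    session = AuditedSession(user="example-engineer")
    for cmd in ["grep -r TODO src", "rm -rf build", "cat notes.txt > copy.txt"]:
        print(cmd, "->", "allowed" if session.request(cmd) else "denied")
```

Denied requests are logged as well as allowed ones, so the audit trail shows what the agent attempted and not only what it was permitted to do.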

What is the history of collaboration between NVIDIA and OpenAI?

The partnership between NVIDIA and OpenAI spans about a decade. It began in 2016, when NVIDIA founder Jensen Huang personally delivered one of the first DGX-1 systems to OpenAI. Since then, the companies have worked closely on AI infrastructure, model optimization, and deployment strategies. The GPT-5.5 launch and Codex rollout are the latest milestones in this collaboration. NVIDIA's involvement goes beyond running OpenAI's models: it extends to co-developing cost-efficient and power-efficient systems for frontier AI. As Jensen Huang told employees in a company-wide email: "Let's jump to lightspeed. Welcome to the age of AI."
